Software environment:

flume-ng-core-1.4.0-cdh5.0.0

spark-1.2.0-bin-hadoop2.3

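Workflow:

- Spark Streaming: uses the spark-streaming-flume_2.10-1.2.0 plugin to start an Avro source that receives the data and processes it;

- Flume agent: the source monitors a directory on the local file system; when a file changes, the Avro sink sends the new data to the port Spark Streaming is listening on.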

Flume configuration:

flume-lxw-conf.properties

    # --> Source name
    agent_lxw.sources = sources1
    # --> Channel name
    agent_lxw.channels = fileChannel
    # --> Sink name
    agent_lxw.sinks = sink1

    # Source configuration
    ## A custom source that works like tail -f and is more reliable than the exec source
    agent_lxw.sources.sources1.type = org.apache.flume.source.taildirectory.DirectoryTailSource
    agent_lxw.sources.sources1.dirs = lxwlog
    ## Directory to monitor
    agent_lxw.sources.sources1.dirs.lxwlog.path = file:///tmp/lxw-source
    # Regular expression for the files to monitor (Java regex syntax)
    agent_lxw.sources.sources1.dirs.lxwlog.file-pattern = ^lxw_.*log$
    agent_lxw.sources.sources1.first-line-pattern = ^(.*)$
    agent_lxw.sources.sources1.channels = fileChannel

    # Sink 1 configuration: send data to port 44444 on slave004.lxw1234.com
    agent_lxw.sinks.sink1.type = avro
    agent_lxw.sinks.sink1.hostname = slave004.lxw1234.com
    agent_lxw.sinks.sink1.port = 44444
    agent_lxw.sinks.sink1.channel = fileChannel
    agent_lxw.sinks.sink1.batch-size = 500
    agent_lxw.sinks.sink1.connect-timeout = 40000
    agent_lxw.sinks.sink1.request-timeout = 40000

    agent_lxw.channels.fileChannel.type = file
    # --> Directory where checkpoint files are stored
    agent_lxw.channels.fileChannel.checkpointDir = /tmp/flume/checkpoint/site
    # --> Directory where the channel data is stored
    agent_lxw.channels.fileChannel.dataDirs = /tmp/flume/data/site
    # --> Maximum capacity of the channel
    agent_lxw.channels.fileChannel.capacity = 10000
    # --> Maximum transaction capacity
    agent_lxw.channels.fileChannel.transactionCapacity = 100
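To sanity-check which file names the `file-pattern` above would select, the expression can be tried in isolation. The following is a minimal sketch: the object name `FilePatternCheck`, the helper `isMonitored`, and the sample file names are hypothetical, and it assumes the custom DirectoryTailSource applies the expression to the bare file name, which this post does not confirm.

```scala
import java.util.regex.Pattern

object FilePatternCheck {
  // Same expression as the file-pattern property in flume-lxw-conf.properties.
  private val filePattern: Pattern = Pattern.compile("^lxw_.*log$")

  // Assumption: the source tests the bare file name (no directory part).
  def isMonitored(fileName: String): Boolean =
    filePattern.matcher(fileName).find()

  def main(args: Array[String]): Unit = {
    // Hypothetical file names, used only for illustration.
    Seq("lxw_2015.log", "lxw_access_log", "app_lxw_1.log", "lxw_1.log.gz")
      .foreach(name => println(name + " -> " + isMonitored(name)))
  }
}
```

Under that assumption, names such as `lxw_2015.log` and `lxw_access_log` would be picked up, while `app_lxw_1.log` (wrong prefix) and `lxw_1.log.gz` (wrong suffix) would be ignored. Note that the pattern does not require a dot before `log`.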

Spark Streaming program:

Spark_Flume.scala

package com.lxw.test

import org.apache.spark.SparkConf

import org.apache.spark.SparkContext

import org.apache.spark.storage.StorageLevel

import org.apache.spark.streaming.Seconds

import org.apache.spark.streaming.StreamingContext

import org.apache.spark.streaming.flume.FlumeUtils

object Spark_Flume {

def main (args : Array[String]) {

if(args.length < 2) {

println("Usage: Spark_Flume <hostname> <port>")

System.exit(1)

}

val hostname = args(0)

val port = Integer.parseInt(args(1))

val sc = new SparkContext(new SparkConf().setAppName("Spark_Flume"))

val ssc = new StreamingContext(sc, Seconds(10))

val flumeStream = FlumeUtils.createStream(ssc, hostname, port,StorageLevel.MEMORY_AND_DISK)

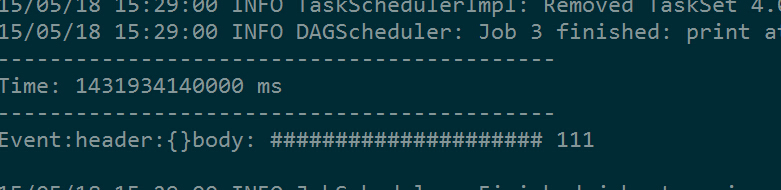

flumeStream.map(e => "Event:header:" + e.event.get(0).toString + "body: " + new String(e.event.getBody.array)).print()

ssc.start()

ssc.awaitTermination()

}

}
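The program above turns the event body into text with `new String(e.event.getBody.array)`. That call uses the platform default charset and reads the whole backing array of the `ByteBuffer`, which may contain bytes outside the readable region. A more defensive variant could look like the sketch below. The object name `EventText` and the helper `bodyAsString` are hypothetical, and UTF-8 is an assumption about the log files' encoding.

```scala
import java.nio.ByteBuffer
import java.nio.charset.StandardCharsets

object EventText {
  // Decode only the readable bytes (position until limit) of the buffer.
  // Assumption: the log lines are UTF-8 encoded.
  def bodyAsString(body: ByteBuffer): String = {
    val view = body.duplicate()                    // own position/limit; caller's buffer is not advanced
    val bytes = new Array[Byte](view.remaining())
    view.get(bytes)
    new String(bytes, StandardCharsets.UTF_8)
  }
}
```

In the streaming job it would be used as `flumeStream.map(e => EventText.bodyAsString(e.event.getBody))`. Working on a duplicate keeps the original buffer's position unchanged, so the event can still be read elsewhere.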

Startup:

- First start the Spark Streaming program:

      ./spark-submit \
        --name "spark-flume" \
        --master spark://192.168.1.130:7077 \
        --executor-memory 1G \
        --class com.lxw.test.Spark_Flume \
        /home/liuxiaowen/spark-flume.jar slave004.lxw1234.com 44444

- Then start the Flume agent:

      flume-ng agent -n agent_lxw --conf . -f flume-lxw-conf.properties

Example results:

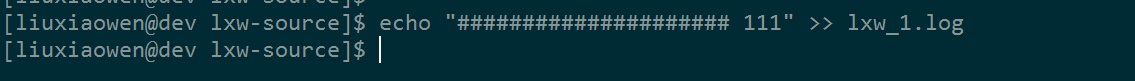

Appending data to the file from the command line

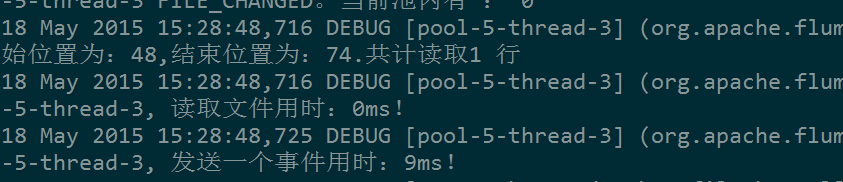

Flume detects the file change

Spark Streaming receives and processes the data

Notes:

- The Spark cluster is already deployed and runs in Standalone mode;

- Every node in the Spark cluster needs spark-streaming-flume_2.10-1.2.0.jar and flume-avro-source-1.4.0-cdh5.0.0.jar added to SPARK_CLASSPATH;

- Compiling Spark_Flume.scala requires spark-assembly-1.2.0-hadoop2.3.0.jar, spark-streaming-flume_2.10-1.2.0.jar, flume-avro-source-1.4.0-cdh5.0.0.jar, and flume-ng-sdk-1.4.0-cdh5.0.0.jar;

- The hostname passed in when starting Spark Streaming (slave004.lxw1234.com) must be one of the nodes in the Spark cluster, because Spark starts a NettyServer on that machine.
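The compile-time jars listed above were added by hand. If the project were built with sbt instead, the same Spark dependencies could be declared roughly as follows. This is only a sketch under assumptions: sbt itself, the project name, and the stock Apache artifacts are not part of the original setup, the CDH-specific Flume jars used in this post are not covered, and whether the Flume classes arrive transitively should be verified against the resolved classpath.

```scala
// build.sbt (hypothetical; the original post compiles against jars added manually)
name := "spark-flume"

// Spark 1.2.0 prebuilt binaries target Scala 2.10
scalaVersion := "2.10.4"

libraryDependencies ++= Seq(
  // Provided by the cluster at run time
  "org.apache.spark" %% "spark-streaming" % "1.2.0" % "provided",
  // Resolves to spark-streaming-flume_2.10-1.2.0, the plugin named in this post
  "org.apache.spark" %% "spark-streaming-flume" % "1.2.0"
)
```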

When reposting, please credit: lxw的大数据田地 » Spark Streaming + Flume integration experiment